The explosive growth of artificial intelligence (AI) is fueling a computational arms race and driving massive capital investment into data centers. From OpenAI’s multibillion-dollar funding rounds to global initiatives deploying advanced LLMs, the demand for compute power is unprecedented. However, this AI boom faces a critical, often overlooked bottleneck: **heat management**. Traditional data centers, designed for lower-density workloads, are rapidly reaching their thermal limits. To sustain the next generation of AI compute, the industry must make a fundamental architectural shift toward advanced liquid cooling.

The Thermal Crisis of AI Compute Density

Modern AI accelerators, such as high-end GPUs and TPUs, are extremely powerful, but nearly all of the electrical power they draw ends up as waste heat. When these components are packed into standard racks, the resulting power density can exceed 30 kW per rack, and future AI workloads are projected to push this past 50 kW per rack. Traditional air cooling, which relies on moving large volumes of cool air across the hardware, cannot keep up with thermal loads of this size. Air carries little heat per unit volume, so the airflow a rack needs grows in direct proportion to its heat load. That physics sets a practical ceiling, and advanced AI workloads have already passed it.
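To put rough numbers on that ceiling, the sketch below applies the sensible-heat relation Q = ρ · V̇ · c_p · ΔT to estimate the airflow a rack would need. The air properties, the 15 K inlet-to-outlet temperature rise, and the function name are illustrative assumptions, not figures from any particular facility.

```python
# Approximate properties of air near 20 °C at sea level (assumed values).
AIR_DENSITY_KG_M3 = 1.2
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0
M3S_TO_CFM = 2118.88  # 1 m^3/s expressed in cubic feet per minute


def required_airflow_m3s(heat_load_w: float, delta_t_k: float) -> float:
    """Volumetric airflow needed to carry heat_load_w at a given air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


if __name__ == "__main__":
    # Hypothetical rack sizes; 15 K is an assumed air temperature rise across the rack.
    for rack_kw in (10, 30, 50):
        flow = required_airflow_m3s(rack_kw * 1000, delta_t_k=15.0)
        print(f"{rack_kw} kW rack: {flow:.2f} m^3/s ({flow * M3S_TO_CFM:,.0f} CFM)")
```

Under these assumptions, a 30 kW rack needs roughly 1.7 m³/s (about 3,500 CFM) of air and a 50 kW rack nearly 5,900 CFM. Moving that much air through a single rack is where fan power, noise, and pressure drop start to become impractical.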

The shift from air-cooled to liquid-cooled infrastructure is not merely an upgrade; it is a **prerequisite** for deploying next-generation, high-density AI workloads. It represents the most significant infrastructure pivot since the advent of the cloud.

Why Liquid Cooling is the Only Solution for AI Scale

Liquid cooling methods, specifically Direct-to-Chip (D2C) and immersion cooling, transfer heat far more effectively than air because liquids carry much more heat per unit volume. These methods interface directly with the heat source, whether that is the chip itself or the entire server, which allows rack power densities that air cooling cannot support.

Direct-to-Chip (D2C) Cooling

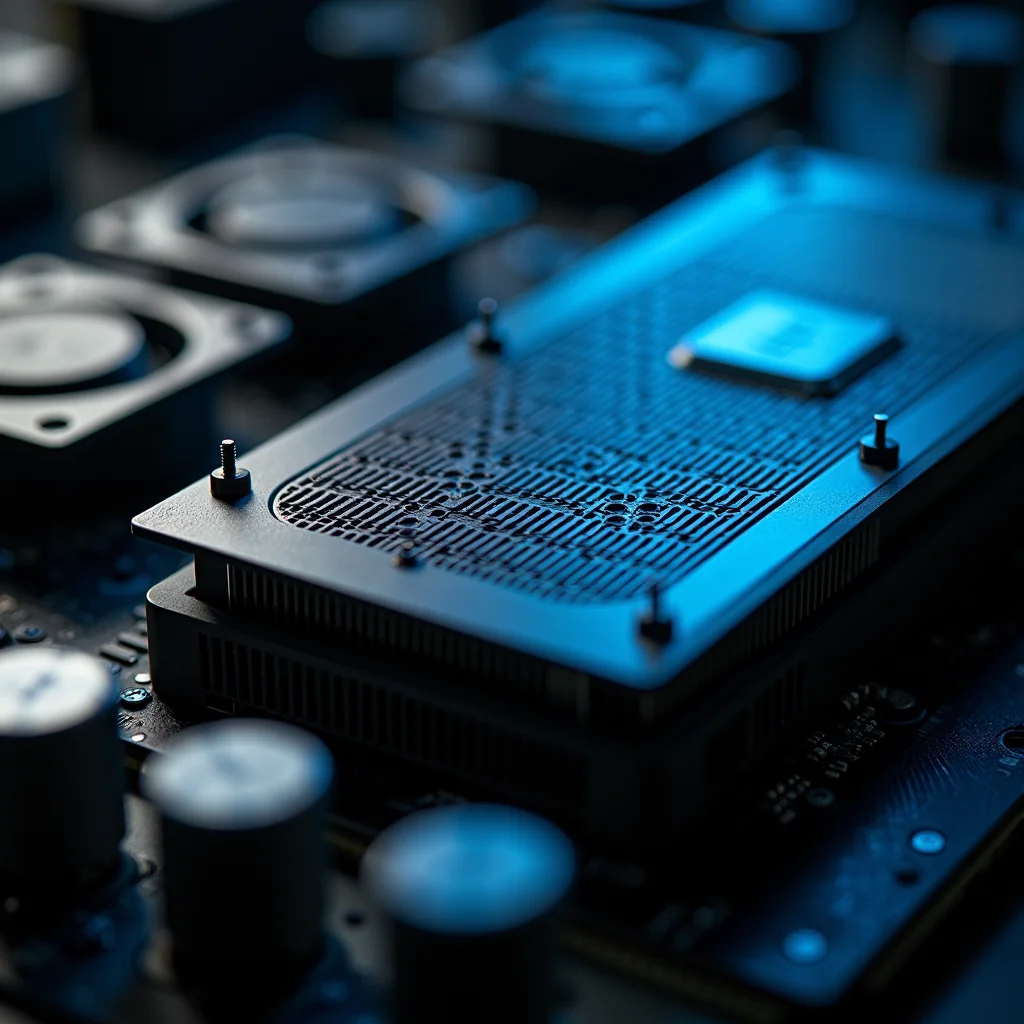

D2C circulates coolant through cold plates mounted directly on the hottest components, such as the GPU die or CPU package. This method is highly effective for targeted cooling and is well suited to retrofitting existing high-density racks. It lets data center operators remove heat at the source, maintaining operational efficiency even as power density climbs.
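As a rough illustration of why a cold-plate loop can handle these loads, the sketch below estimates the coolant flow needed for a given rack heat load using the same sensible-heat relation, Q = ṁ · c_p · ΔT. It assumes plain water as the coolant and a 10 K coolant temperature rise. The `capture_fraction` parameter is a hypothetical knob for the share of rack heat the cold plates actually collect, with the remainder still leaving as warm air.

```python
# Approximate properties of water near 25 °C (assumed; real loops often use treated water or glycol mixes).
WATER_DENSITY_KG_M3 = 997.0
WATER_SPECIFIC_HEAT_J_KG_K = 4186.0


def required_coolant_flow_lpm(
    heat_load_w: float, delta_t_k: float, capture_fraction: float = 1.0
) -> float:
    """Coolant flow in litres per minute needed to absorb the captured share of heat_load_w."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    if not 0 < capture_fraction <= 1:
        raise ValueError("capture_fraction must be in (0, 1]")
    captured_w = heat_load_w * capture_fraction
    mass_flow_kg_s = captured_w / (WATER_SPECIFIC_HEAT_J_KG_K * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / WATER_DENSITY_KG_M3
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min


if __name__ == "__main__":
    # Hypothetical 30 kW rack with an assumed 10 K coolant temperature rise.
    print(f"{required_coolant_flow_lpm(30_000, 10.0):.1f} L/min at full capture")
    print(f"{required_coolant_flow_lpm(30_000, 10.0, capture_fraction=0.75):.1f} L/min at 75% capture")
```

With these assumed values, a 30 kW rack needs on the order of 43 L/min of water, a flow that fits in modest tubing. The same heat load carried by air requires volumes measured in cubic metres per second.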

Immersion Cooling

Immersion cooling submerges the entire server or rack in a dielectric (electrically non-conductive) fluid. Because the fluid is in contact with every component, it cools all of them simultaneously rather than only the hottest ones. It is particularly advantageous for hyperscale AI deployments where maximizing power density and minimizing physical footprint are paramount.

The Infrastructure and Skill Shift

The transition to liquid cooling requires more than just plumbing; it demands a complete overhaul of the data center’s operational architecture. DevOps and infrastructure teams must now master concepts like Coolant Distribution Units (CDUs), manifolds, and fluid dynamics. This creates a new, specialized skill gap, elevating the importance of **thermal engineering** alongside traditional compute expertise.
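One small example of the planning arithmetic this involves is sizing CDUs against rack heat loads. The sketch below is a simplified illustration only: the function name, the 20% headroom, and the single spare unit are all assumptions made for the example, and real sizing also depends on flow rates, pressure drop, and facility water temperatures.

```python
import math
from typing import Sequence


def cdus_needed(
    rack_loads_kw: Sequence[float],
    cdu_capacity_kw: float,
    headroom: float = 0.2,
    spare_units: int = 1,
) -> int:
    """Estimate CDU count for a row of racks (hypothetical planning helper).

    headroom is the fraction of each CDU's rated capacity left unused;
    spare_units adds redundant CDUs on top of the minimum.
    """
    if cdu_capacity_kw <= 0:
        raise ValueError("cdu_capacity_kw must be positive")
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    if spare_units < 0:
        raise ValueError("spare_units must be non-negative")
    usable_kw = cdu_capacity_kw * (1.0 - headroom)
    minimum_units = math.ceil(sum(rack_loads_kw) / usable_kw)
    return minimum_units + spare_units


if __name__ == "__main__":
    # Hypothetical row: twenty 50 kW racks served by CDUs rated at 600 kW each.
    row = [50.0] * 20
    print(cdus_needed(row, cdu_capacity_kw=600.0))
```

In this hypothetical row, the racks total 1,000 kW. Each 600 kW CDU contributes 480 kW after headroom, so three units cover the load and a fourth provides the spare.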

The massive capital investments pouring into AI infrastructure, which signal global confidence in the technology, are directly accelerating the adoption of these advanced thermal solutions. Companies are willing to pay a premium for the capability to handle extreme power densities, making liquid cooling an immediate business necessity.

For data center architects, the focus is shifting from ‘compute availability’ to ‘thermal capacity.’ Mastering these advanced cooling techniques is the defining technical challenge of the decade.

For further reading on industry standards, check out the Green Technology Cooling Trends Report. For a deeper dive into the underlying physics and power requirements, consult the Advanced Liquid Cooling Technical Guides.